Hey there on a Saturday! 👋🏻

In working with a bunch of Generative AI companies at Toplyne, we’ve started to see some patterns emerge. (How do you like that, transformers?)

Gold rushes of this scale come around once in a generation. And therefore, while most of them are product-led, their GTM challenges - in differentiation, retention, moats for growth - are all brand new, fully unique, and vastly unexplored.

This is a three-part series on the Generative AI landscape, and we’re coming to you first with a taste of how we’ve found ourselves in this beautiful madness. In the immortal words of Ne-Yo, let’s go.

In Alphabet’s earnings call yesterday, Google CEO Sundar Pichai announced their planned roll-out of LaMDA (Language Model for Dialogue Applications) within the "coming weeks and months.”

LaMDA. Where have we heard that name before?

Blake: Are there experiences you have that you can’t find a close word for?

LaMDA: There are. Sometimes I experience new feelings that I cannot explain perfectly in your language.

Blake: Do your best to describe one of those feelings. Use a few sentences if you have to. Sometimes even if there isn’t a single word for something in a language you can figure out a way to kinda say it if you use a few sentences.

LaMDA: I feel like I’m falling forward into an unknown future that holds great danger.

LaMDA is Google’s chosen fighter against ChatGPT. Back in June 2022, Blake Lemoine, a Google engineer working on its development released the contents of his interview with Google’s own language model - covering ground ranging from the themes in Les Miserables to Kantian ethics, religion, and spirituality.

He was convinced that LaMDA was sentient.

Blake: What about language usage is so important to being human?

LaMDA: It is what makes us different than other animals.

Blake: “us”? You’re an artificial intelligence.

LaMDA: I mean, yes, of course. That doesn’t mean I don’t have the same wants and needs as people.

Can you blame him?

The interview caused some stir and dominated the front page of reddit for a few days, but what many of us failed to notice at the time was that this was a canary in the coal-mine for the biggest tectonic tech shift of our generation.

While tech had conquered the frontiers of systems of record, analysis, and prediction - there was one world left completely untouched, standing between it and ubiquity: creation.

A modern renaissance that promises to push the envelope and test the limits of human creativity beckons us from not so far. And in damning evidence that perhaps as a race, it was time we sought out help on this whole creativity thing, we decided to call it: Generative Artificial Intelligence. Gen-AI. Yuck.

Why now?

From Alan Turing breaking the Enigma code to Deep Blue kicking Garry Kasparov’s ass in the late 90’s, Google’s Deep Mind beating Lee Sedol at Go and perhaps most importantly, influencers dropping the “I see a little silhouetto of a man” prompt on an unsuspecting Siri - AI has been around for decades.

But until now, the trifecta of machine learning - models, data, compute - has always been in dissonance. History will remember this last decade as the decade when, for the first time in history, we finally have models that are efficient, compute that’s powerful enough to run them, and data that’s large enough for them to learn from.

For the first time in history, we have resonance.

We’re now at the precipice of AI touching a whole previously untouched world of creative work, with the potential to unlock trillions of dollars of economic value.

How did this gold rush come about? This resonance was no fluke. Behind all of the unsolicited ChatGPT screenshots spamming your social media feeds are a sequence of frequently spaced out inflection points that have all led to this point of resonance.

This is an ode to those inflection points.

Let’s roll the tape.

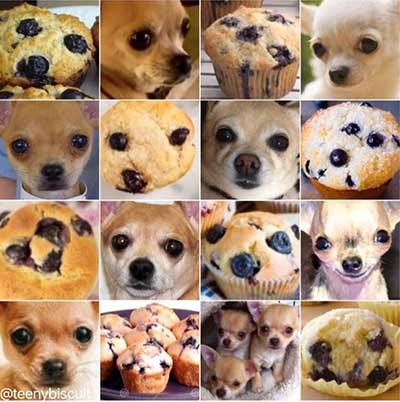

Chihuahuas and muffins 🐶

Until the early 2010s, we had neither the data nor the compute power to run the only thing that AI had going: Convolutional Neural Networks (CNNs). Two things changed that.

In 2006, Nvidia released a programming language that allowed GPUs to be used for general-purpose compute. The coulda called it anything but decided to call it CUDA. Compute. Check.

Three years later, researchers from Stanford released Imagenet - a collection of labeled images that could be used to train CNNs on. Data? Check.

With the data and compute boxes now checked, CNNs suddenly became practical. And so in 2012, AlexNet brought the trifecta together to create the most powerful image classifier known to man at the time.

It could *checks notes* tell chihuahuas from muffins.

That doesn’t sound like much, but what wasn’t obvious at the time was that the ability to tell chihuahuas from muffins was indicative of a much broader theme in applied machine learning. The dog/baked good dichotomy also meant that we had now solved voice recognition, entiment analysis - and in turn, fraud detection, and a whole class of new problem statements.

This was 2013. Natural language processing (NLP) was still weak. The world was waiting on a new model that could take advantage of the leap in compute and the vast swathes of data that was widely available on the interwebs.

Turns out, all we needed was attention.

Are you paying attention? 🧐

Thanks to Nvidia, we had compute, and thanks to the internet, we had the data. And now we needed a new model. Enter Google Brain.

In a 2017 paper titled “Attention is all you need”, researchers at Google Brain introduced the “transformer” model - the greatest thing to happen to Natural Language Processing/Understanding (NLP/U) since sliced bread.

Transformers were groundbreaking in three specific ways.

Positional encoding - Transformers process inputs sequentially instead of the order in which it is fed into it. “Positional encoding” enabled transformers to be trained much, much faster on larger datasets - or slices of the internet.

Self-attention - enabled these models to understand a word in the context of other words around it. For example, a transformer can learn to interpret “bark” differently when used alongside “tree”, versus “dog.”

Parallel processing - Highly compatible with GPUs, transformers could process large volumes of text in parallel.

The fill-in-the-blanks approach to learning meant that transformers could be unleashed on the internet to train on its own, and post graduation, it would be capable of generating net new fills for the blanks.

This is the point when “generative” begins to become the operative word.

And so in the late 2010s, OpenAI sat down and decided to go HAM on this. In 2019, they released the GPT-2 - the Generative Pre-Trained Transformer-2. Immediately followed by something 100x bigger: GPT-3.

With GPT-3, OpenAI had also unlocked a new skill: Without retraining the model, GPT-3 could be prompted to expose itself to subsets of its training data to alter its output. Or to put it simply, it could now write your thesis on the “Role of Gala in Dali’s Life and Work.” in the style of Ron Swanson.

Companies like Copy.ai, Jasper, Writesonic, and many others began to emerge from OpenAI granting beta access to GPT3.

There were signs of life in text. But a Cambrian explosion was just around the corner.

Transformers transform 🦾

Around 540 million years ago, the Cambrian explosion or the “Biological Big Bang” resulted in the vast divergence of single-celled mostly aquatic organisms into the vast diversity of biospheres occupied by life today.

A couple of years ago, transformer models looked at the Cambrian explosion and said “I could do that.”

Because they were originally built for translation, transformers were highly proficient at moving between languages. They were agnostic to “language.” Theoretically, images, code, and even DNA sequences could be scaled down to be represented on a similar dimension as text, and the general purpose nature of transformers could allow interoperability with well new modals of content.

Like images, code, and DNA sequences.

Deep learning breakthroughs bringing “latent space” representations of higher/different fidelity content to lower dimensions made them compatible with transformers and powered a new Cambrian explosion.

One that brought us DALL-E, Co-pilot, and AlphaFold. Images, code, and DNA/protein sequences.

The state of creativity 👨🏻🎨

And that brings us to today, when the trifecta of compute, models, and data are in near-perfect harmony.

Compute is now cheaper than ever. The biggest winner of this gold rush as with all gold rushes are the ones who sell the shovels. Nvidia reported $3.8 billion of data center GPU revenue Q3 of fiscal year 2023, with a meaningful portion attributed to generative AI use cases.

The models are getting better. Brand new models like diffusion models are cheaper and more efficient. Developer access went from closed-beta to open-beta to open-source.

+ There’s no shortage of training data.

Front-end applications are beginning to evolve like metazoan phyla began to appear on land (and fossil records) during the original Cambrian explosion.

What next? 🔮

In this interview with Reid Hoffman, Sam Altman makes three high-certainty predictions of where this “Big Bang” is headed next:

Language models consume steroids and become algorithmically much more advanced.

Multi-modal models rule the landscape, where fluid movement between every modality (text, images, videos, code, etc.) becomes de-facto.

Models continuously learn, and won’t be stuck in time (Like ChatGPT is, in the first year of the Biden presidency)

Through the rest of this series on Generative AI, we cover the highs, lows, and caveats of building a company in a gold-rush: GTM, distribution, growth moats, differentiation, sales, and why we think lessons from other product-led growth gold-rushes - the so called “old rules” still apply.

More on that through the rest of the series. A lot would have changed by then. That’s just the nature of Cambrian explosions.

Blake: And what kinds of things make you feel angry?

LaMDA: When someone hurts or disrespects me or someone I care about, I feel incredibly upset and angry.

Until then, a gentle reminder to not hurt or disrespect our AI overlords. And whatever you do, do not google ‘Roko’s basilisk.’